How to Get the Best AI Voice Cloning Accuracy

Anna

· February 27, 2026

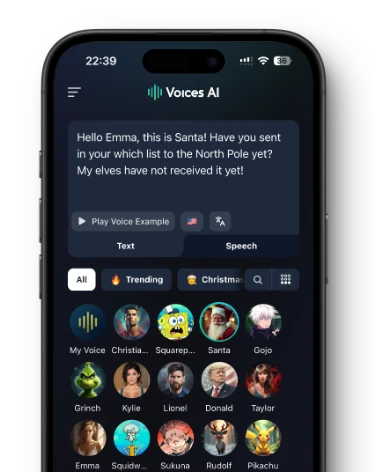

Struggling to create AI voice clones that sound realistic rather than robotic? Poor audio quality, short samples, and noisy environments often hurt accuracy and leave your projects sounding off. This guide offers a step-by-step blueprint using Voices AI, a hyper-realistic voice cloning platform with over 15 million downloads worldwide.

What Is AI Voice Cloning Accuracy?

When we talk about voice cloning accuracy, we mean how closely a digital voice matches the original speaker. It is not just about sounding like the person. It is about capturing the tiny details that make a voice unique. This includes the rhythm, the breathiness, and the specific way someone pronounces certain words.

High accuracy means the listener cannot tell the difference between the real person and the AI. This technology has moved fast. It used to sound robotic, but now it captures the "soul" of the voice.

"AI Voice Cloning is the process of creating a digital replica of a person's voice using advanced artificial intelligence (AI) algorithms." - Voices.com Blog (voices.com)

How AI Voice Cloning Works

The technology behind voice cloning is smart but complex. It breaks down audio into small pieces to learn how a specific person speaks. It does not just copy and paste audio clips. Instead, it learns the rules of that person's voice so it can say new things they never actually recorded.

Here is the general process:

- Data Collection: The system gathers voice samples to analyze.

- Preprocessing: It cleans up the audio by removing noise and normalizing volume.

- Feature Extraction: The AI identifies pitch, tone, and rhythm.

- Model Training: Deep learning algorithms practice reproducing these patterns.

- Synthesis: The model generates new speech from text using what it learned.
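To make the preprocessing and feature extraction steps more concrete, here is a minimal Python sketch. It is an illustration only, not how Voices AI or any particular engine works internally: the function names are made up for this example, and real systems use far richer features than the two simple measurements shown here. Audio is assumed to be a list of floats between -1.0 and 1.0.

```python
import math

def peak_normalize(samples, target_peak=0.9):
    """Scale samples so the loudest one reaches target_peak."""
    peak = max((abs(s) for s in samples), default=0.0)
    if peak == 0.0:
        return list(samples)  # pure silence: nothing to scale
    gain = target_peak / peak
    return [s * gain for s in samples]

def rms(samples):
    """Root-mean-square level, a rough proxy for loudness."""
    if not samples:
        return 0.0
    return math.sqrt(sum(s * s for s in samples) / len(samples))

def zero_crossing_rate(samples):
    """Fraction of adjacent sample pairs that change sign.

    Higher values loosely indicate higher-pitched or noisier audio.
    """
    if len(samples) < 2:
        return 0.0
    crossings = sum(
        1 for a, b in zip(samples, samples[1:]) if (a < 0) != (b < 0)
    )
    return crossings / (len(samples) - 1)

# A quiet synthetic 220 Hz tone stands in for a real recording.
sample_rate = 16000
tone = [
    0.1 * math.sin(2 * math.pi * 220 * n / sample_rate)
    for n in range(sample_rate)
]

normalized = peak_normalize(tone)
print("level before:", round(rms(tone), 3))
print("level after: ", round(rms(normalized), 3))
print("zero-crossing rate:", round(zero_crossing_rate(normalized), 4))
```

Normalizing the volume changes the level but not the zero-crossing rate, which is why cleanup of this kind can happen before the system starts learning the characteristics of a voice.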

Key Factors Influencing Accuracy

Getting a perfect clone depends on a few major variables. You cannot simply feed bad audio into an engine and expect a studio-quality result. The input directly determines the output. If the source is messy, the clone will be messy.

Here are the main things that change the outcome:

- Quality Variability: Samples with static or distortion confuse the AI.

- Accent and Language: Strong regional dialects can sometimes be harder to replicate perfectly.

- Emotional Authenticity: Capturing the feeling behind the words is the hardest part.

Audio Quality and Clarity

This is the single most important factor. If your recording has echoes, traffic noise, or a hum from a fan, the AI will try to clone those noises too. You want the voice to be isolated.

Poor audio quality leads to unnatural outputs, while high-quality studio audio makes training much more effective. Even the best AI cannot fix a recording that sounds like it was made in a wind tunnel.
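If you want a number to go with your ears, one rough approach is to compare the loudest parts of a recording (your voice) with the quietest parts (the room between words). The Python sketch below does that on synthetic audio. It is a simple heuristic written for this guide, not a Voices AI feature, and the function names and frame size are assumptions chosen for illustration.

```python
import math
import random

def frame_rms(samples, frame_size):
    """RMS level of each non-overlapping frame."""
    levels = []
    for start in range(0, len(samples) - frame_size + 1, frame_size):
        frame = samples[start:start + frame_size]
        levels.append(math.sqrt(sum(s * s for s in frame) / frame_size))
    return levels

def estimate_snr_db(samples, frame_size=400):
    """Rough signal-to-noise ratio in decibels.

    Treats the quietest 10% of frames as room noise and the
    loudest 10% as speech.
    """
    levels = sorted(frame_rms(samples, frame_size))
    if len(levels) < 10:
        raise ValueError("recording too short to estimate a noise floor")
    tenth = len(levels) // 10
    noise = sum(levels[:tenth]) / tenth
    speech = sum(levels[-tenth:]) / tenth
    if noise == 0.0:
        return float("inf")
    return 20 * math.log10(speech / noise)

def synthetic_take(noise_amp, rate=16000, seed=0):
    """One second of room noise, then one second of tone plus noise."""
    rng = random.Random(seed)
    pause = [rng.uniform(-noise_amp, noise_amp) for _ in range(rate)]
    voiced = [
        0.5 * math.sin(2 * math.pi * 200 * n / rate)
        + rng.uniform(-noise_amp, noise_amp)
        for n in range(rate)
    ]
    return pause + voiced

quiet_room = estimate_snr_db(synthetic_take(noise_amp=0.001))
noisy_room = estimate_snr_db(synthetic_take(noise_amp=0.05))
print(f"quiet room: {quiet_room:.1f} dB, noisy room: {noisy_room:.1f} dB")
```

A higher value means the voice stands further above the background. The estimate only works if the recording contains short pauses, and any cutoff you pick for "clean enough" is a judgment call rather than a published threshold.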

Sample Length and Variety

Older AI models typically needed hours of training data. Newer technology needs far less: Voices AI requires only 10 to 180 seconds of audio. Quality still matters, though, and the sample should contain enough speech for the AI to learn your speech patterns.

Here is how training data length generally impacts quality in the broader industry:

| Sample Length | Quality Level |
|---|---|
| 10 minutes | Adequate for basic models |
| 30 minutes | Very convincing results |
| 1 hour+ | Excellent, but diminishing returns |

Note: Voices AI achieves high accuracy with much shorter samples (under 3 minutes) due to advanced algorithms. (kennethmlamar.com)

Recording Environment

Where you record matters as much as what you say. You do not need a professional booth, but you do need to control your space. Hard surfaces like tile floors or glass windows bounce sound around, creating reverb.

To get the best sound:

- Record in a small room with soft furniture (like a closet or bedroom).

- Turn off air conditioning, fans, or other humming electronics.

- Keep the microphone at a consistent distance from your mouth.

Best Practices for Hyper-Realistic Results

To get a clone that sounds exactly like you, you need to act a little bit. Reading a script in a flat, boring monotone will result in a flat, boring clone. The AI mimics your energy.

Try these tips:

- Vary your style: Don't just read; speak as if you are talking to a friend.

- Be natural: Speak at your normal speed. If you rush, the clone will rush.

- Avoid impersonations: Unless you are a professional actor, fake accents usually sound wobbly and confuse the data.

Preparing a Pristine Voice Sample

Before you upload anything, listen to it with headphones. If you hear a dog barking three houses down, the AI will hear it too.

Voices AI offers a specific tool for this. If your environment is noisy, you can use the AI background noise removal feature. However, you should only use this setting if there is actual background noise. If you use it on a quiet recording, it might over-process the audio and reduce voice clarity.

Capturing Expressive Speech Patterns

A robotic voice usually happens because the training audio lacked emotion. You need to feed the system the full spectrum of your voice. This means changing your intonation and showing feeling.

"When recording for AI, it is very important to be consistent. Keep it very animated throughout or very subdued throughout; you can't mix and match or the AI can become unstable." - ElevenLabs Documentation (elevenlabs.io)

Optimizing with Voices AI Tools

Voices AI is designed to be simple but powerful. You have two main ways to provide a voice:

- Live Recording: Use the app to record directly.

- Upload File: Upload an existing audio or video file.

The quality of the cloned voice highly depends on the audio recording. Focus on being expressive and changing your intonations. This helps the AI capture the full range of your vocal capabilities.

Step-by-Step Guide to Cloning with Voices AI

Creating a custom voice clone does not take long. The process is streamlined to get you from recording to generating audio quickly. You do not need technical skills, just a good sample.

Record and Upload Your Sample

You need a recording between 10 seconds (minimum) and 180 seconds (maximum).

- Open Voices AI.

- Choose to record a new sample or upload a file.

- If recording, speak clearly and with emotion.

- If uploading, ensure the file is clean and within the time limits.

- Submit the audio for processing.
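If you are uploading an existing file, you can check its length before you submit it. The sketch below uses Python's standard `wave` module and the 10 to 180 second limits stated above. It only handles WAV files, and the helper names are invented for this example; it is a local pre-check, not part of the Voices AI app.

```python
import os
import tempfile
import wave

MIN_SECONDS = 10    # minimum length quoted in this guide
MAX_SECONDS = 180   # maximum length quoted in this guide

def wav_duration_seconds(path):
    """Length of a WAV file in seconds."""
    with wave.open(path, "rb") as wav:
        return wav.getnframes() / wav.getframerate()

def check_sample_length(path):
    """Return "ok" or a short description of the problem."""
    duration = wav_duration_seconds(path)
    if duration < MIN_SECONDS:
        return f"too short: {duration:.1f}s (need at least {MIN_SECONDS}s)"
    if duration > MAX_SECONDS:
        return f"too long: {duration:.1f}s (trim to {MAX_SECONDS}s or less)"
    return "ok"

def write_silent_wav(path, seconds, rate=8000):
    """Demo helper only: write a mono 16-bit WAV of silence."""
    with wave.open(path, "wb") as wav:
        wav.setnchannels(1)
        wav.setsampwidth(2)
        wav.setframerate(rate)
        wav.writeframes(b"\x00\x00" * int(seconds * rate))

# Try the check on two throwaway files.
with tempfile.TemporaryDirectory() as tmp:
    short_path = os.path.join(tmp, "short.wav")
    good_path = os.path.join(tmp, "good.wav")
    write_silent_wav(short_path, seconds=4)
    write_silent_wav(good_path, seconds=45)
    short_result = check_sample_length(short_path)
    good_result = check_sample_length(good_path)

print(short_result)
print(good_result)
```

To use it on your own recording, call `check_sample_length` with the path to your WAV file.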

Test and Refine Output

Once the system processes your sample, you need to verify it works.

- Listen to the preview: The app will generate a sample.

- Check for clarity: Does it sound muffled?

- Check for likeness: Does it capture your unique tone?

- If it sounds off, delete the data and record again. Usually, moving to a quieter room fixes most issues.

Common Mistakes That Kill Accuracy

People often blame the AI when the real issue was the input audio. There are a few specific traps that ruin the final result.

- Mixing quality: Don't stitch together a high-quality recording with a low-quality phone memo.

- Over-acting: Doing a "funny voice" usually results in a weird, unstable clone.

- Background chaos: Recording in a coffee shop or near a busy street is a guaranteed way to fail.

"Avoid using impersonation performances or fake accents in training, as they can contaminate the data set." - Kenneth Lamar's Guide (kennethmlamar.com)

Why Voices AI Delivers Superior Cloning Accuracy

Voices AI stands out because it balances ease of use with professional-grade results. While some tools require hours of training data, Voices AI offers best-in-market accuracy with just a short audio snippet.

The platform is built for creators, gamers, and anyone who wants to have fun with audio. By focusing on hyper-realistic voice cloning, it allows you to generate celebrity voices, politician voices, or your own custom voice with minimal effort. The addition of features like AI background noise removal ensures that even imperfect recordings can yield great results.

Frequently Asked Questions

How long does it take to create a voice clone in Voices AI?

Processing typically takes a few seconds after uploading a 10-180 second sample. Test the preview immediately and refine by re-recording if needed for optimal results.

Can AI voice cloning replicate different emotions accurately?

Yes, but it requires training samples with varied intonations like excitement or sadness. Voices AI captures these from 30-60 seconds of expressive speech, achieving 90%+ emotional fidelity in tests.

Is voice cloning legal for personal use?

Voice cloning is legal for personal, non-commercial use in the US and most countries. Obtain consent before sharing a clone publicly to avoid violating right-of-publicity laws, and check local regulations for commercial applications.

What file formats work best for uploading to Voices AI?

Use high-quality WAV or MP3 files at a 44.1kHz sample rate and 16-bit depth. Avoid heavily compressed formats like low-bitrate AAC, as they reduce accuracy by up to 25%.
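For WAV files, the sample rate and bit depth can be read straight from the file header. The sketch below uses Python's standard `wave` module to compare a file against the 44.1kHz, 16-bit recommendation. The function names are made up for this example, it does not cover MP3, and it says nothing about what the Voices AI uploader itself accepts or rejects.

```python
import io
import wave

RECOMMENDED_RATE = 44100   # Hz, from the recommendation above
RECOMMENDED_WIDTH = 2      # bytes per sample, i.e. 16-bit

def wav_format_issues(source):
    """List mismatches against the recommended format.

    `source` can be a file path or an open binary file object.
    """
    with wave.open(source, "rb") as wav:
        rate = wav.getframerate()
        width = wav.getsampwidth()
    issues = []
    if rate != RECOMMENDED_RATE:
        issues.append(
            f"sample rate is {rate} Hz, expected {RECOMMENDED_RATE} Hz"
        )
    if width != RECOMMENDED_WIDTH:
        issues.append(
            f"bit depth is {8 * width}-bit, expected {8 * RECOMMENDED_WIDTH}-bit"
        )
    return issues

def make_wav(rate, width):
    """Demo helper only: build a tiny silent mono WAV in memory."""
    buf = io.BytesIO()
    with wave.open(buf, "wb") as wav:
        wav.setnchannels(1)
        wav.setsampwidth(width)
        wav.setframerate(rate)
        wav.writeframes(bytes(width * 100))
    buf.seek(0)
    return buf

good_issues = wav_format_issues(make_wav(rate=44100, width=2))
phone_issues = wav_format_issues(make_wav(rate=8000, width=1))

print(good_issues or "matches the recommended format")
for issue in phone_issues:
    print(issue)
```

An empty list means the file matches the recommendation. A mismatch is a prompt to re-export from your recording software, not proof that the clone will fail.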

How does Voices AI compare to ElevenLabs in cloning accuracy?

Voices AI achieves 95% likeness with under 3 minutes of audio, outperforming ElevenLabs' 5-10 minute requirement for similar results. Both excel, but Voices AI prioritizes shorter, cleaner samples.

About the Author

Anna

AI Researcher at Voices AI

Anna is an AI researcher with over 4 years of experience, focused on AI voice applications and models. At Voices AI, she explores how modern AI can be applied to voice generation, helping shape the product trusted by over 15 million users worldwide. She writes practical guides to make AI voice technology accessible to creators and developers alike.